Yelp Review Trends: Analysis and Forecast

Analyzed trends in review count, review sentiment, and ratings over time, and built a gradient boosting regressor model to evaluate the impact of a review on the future rating of businesses.

Brief description

This project uses a time-series approach to analyze trends in Yelp review counts, ratings, and sentiment. Based on the overall trends in these review characteristics, I built a gradient boosting regressor model to evaluate the impact of a review on businesses’ future ratings. This project uses the publicly available Yelp review dataset.

Motivation

A good reputation often contributes to a business’s success. In the Internet era, online reviews play an increasingly important role in shaping a business’s reputation. Existing reviews can influence and bias how future customers form their opinions of a business. A glowing review might attract people who were not initially interested, while a negative review could deter potential customers from visiting. Just how much power does a single review have?

Data selection

I selected businesses and reviews from the Yelp dataset based on two main considerations: 1) the length of the review history, and 2) the number of reviews available. I needed businesses with a sufficient number of reviews spread over a long enough period of time. A business with 8+ years of review history but only 10 reviews would not be ideal and would limit the types of analyses I could perform. Therefore, I first kept only businesses with at least 2 years of review history, and then selected those whose total review count is above the 90th percentile of the review count distribution. This left me with 13,814 businesses and 4,438,241 reviews to work with. I further cleaned the reviews to exclude non-English reviews. The final dataset contains 13,814 restaurants and 4,337,534 reviews.
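
The two selection steps can be sketched with pandas as below. This is a minimal sketch under stated assumptions, not the code used for this project: the column names `business_id` and `date` follow the Yelp dataset's review file, the function name `select_businesses` is hypothetical, review history length is measured as the span between a business's first and last review, and the 90th-percentile threshold is assumed to be computed over the businesses that survive the 2-year filter. The non-English filter is omitted because the language-detection method is not specified.

```python
import pandas as pd


def select_businesses(reviews: pd.DataFrame, min_years: float = 2.0,
                      count_quantile: float = 0.90) -> pd.DataFrame:
    """Keep reviews from businesses with a long and dense review history.

    Assumes one row per review with (at least) `business_id` and `date`.
    """
    reviews = reviews.assign(date=pd.to_datetime(reviews["date"]))
    per_business = reviews.groupby("business_id")["date"].agg(["min", "max", "count"])

    # Step 1: keep businesses whose first and last reviews are >= min_years apart.
    span_years = (per_business["max"] - per_business["min"]).dt.days / 365.25
    long_history = per_business[span_years >= min_years]

    # Step 2 (assumed order): among those, keep businesses whose review count
    # is above the chosen percentile of the remaining businesses' counts.
    threshold = long_history["count"].quantile(count_quantile)
    selected = long_history[long_history["count"] > threshold].index

    return reviews[reviews["business_id"].isin(selected)]


# Tiny synthetic example (not the real Yelp data): b0..b9 each span 3 years
# with 2..11 reviews; "short" has 50 reviews packed into under two months.
frames = [
    pd.DataFrame({"business_id": f"b{k}",
                  "date": pd.date_range("2015-01-01", "2018-01-01", periods=k + 2)})
    for k in range(10)
]
frames.append(pd.DataFrame({"business_id": "short",
                            "date": pd.date_range("2020-01-01", periods=50, freq="D")}))
demo = pd.concat(frames, ignore_index=True)
kept = select_businesses(demo)
print(kept["business_id"].unique())  # only "b9" passes both filters
```

Because the percentile is computed after the history filter, the 50-review business with a short history never influences the threshold; computing the percentile over all businesses first would give a different cutoff.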

Trend of yelp reviews

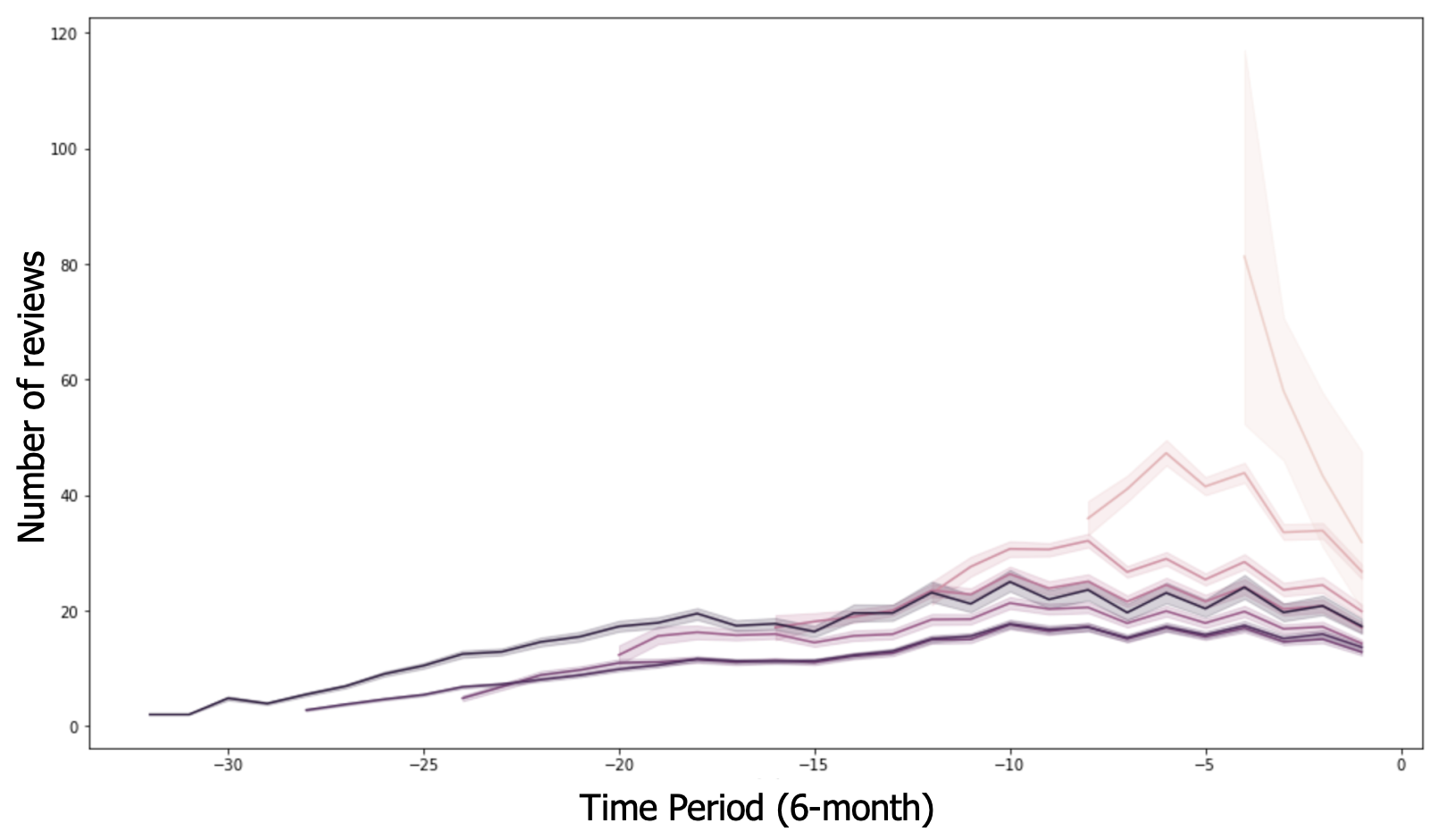

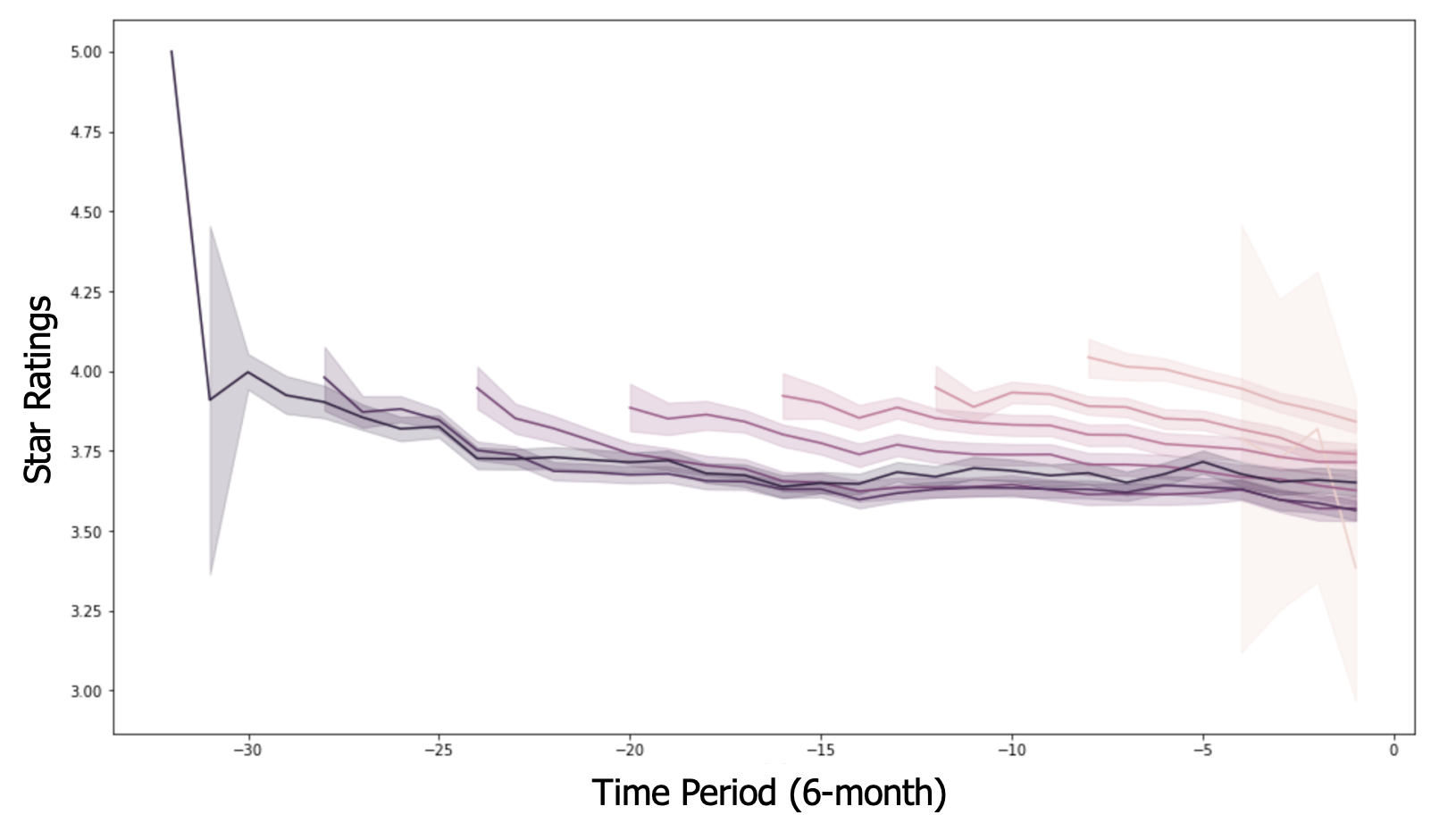

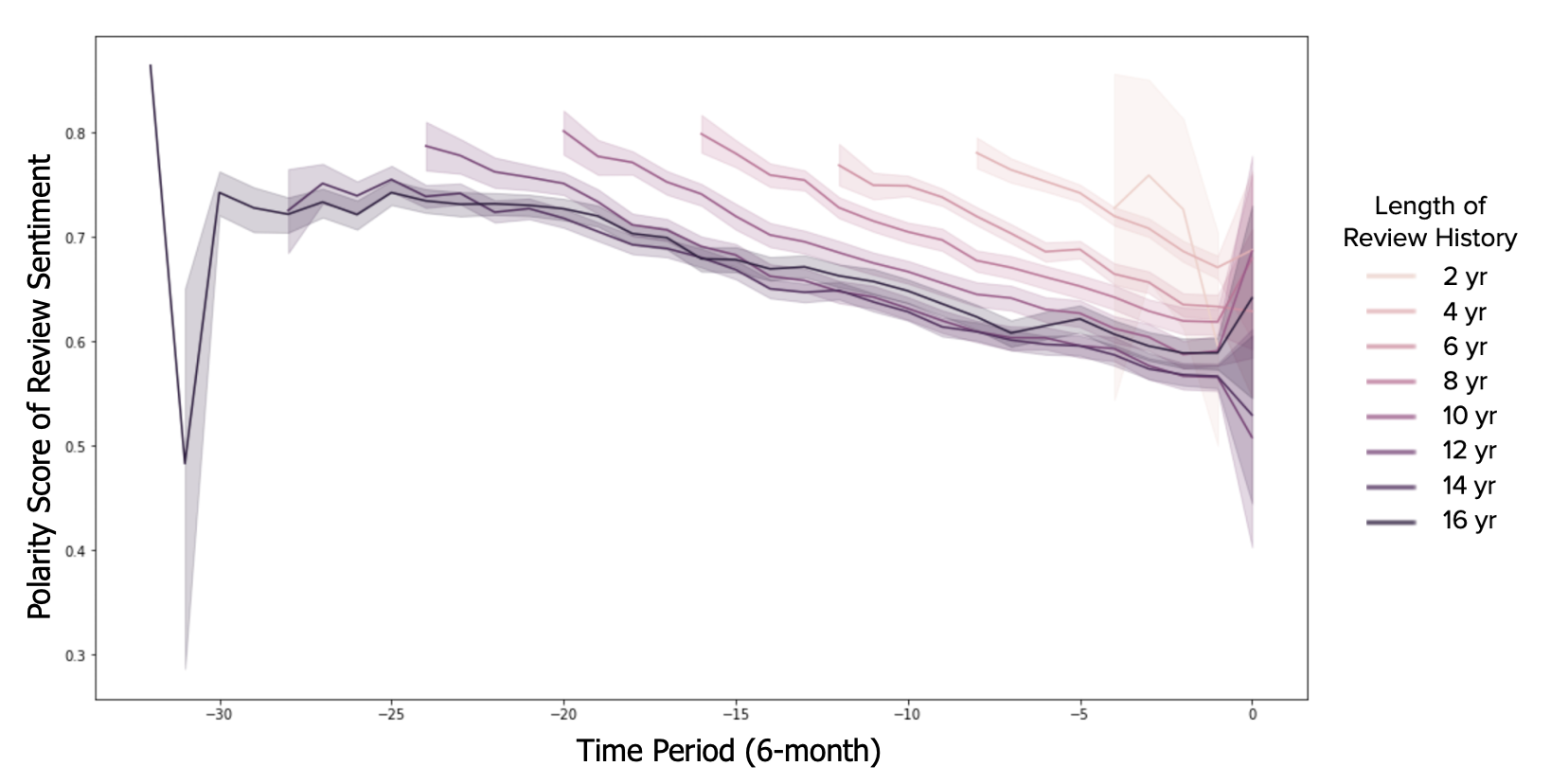

The Yelp dataset does not include the opening dates of businesses. Therefore, I could only analyze the reviews retrospectively: I aligned each business’s reviews at its most recent review and worked backward from there. I segmented the reviews into six-month windows counted back from that point and analyzed the trends of Yelp reviews from three aspects: 1) the number of reviews received, 2) the average star rating, and 3) the polarity of review sentiment.
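
A minimal pandas sketch of this retrospective windowing follows. It assumes one row per review with hypothetical column names `business_id`, `date`, `stars`, and `polarity` (a per-review sentiment score), and the function name `retrospective_windows` is likewise hypothetical. It approximates a month as 30.44 days so every window has the same length; passing `window_months=3` would give the shorter window mentioned in the concluding remarks.

```python
import pandas as pd


def retrospective_windows(reviews: pd.DataFrame, window_months: int = 6) -> pd.DataFrame:
    """Aggregate each business's reviews into windows counted back from its latest review.

    Window 0 covers the most recent `window_months` months, window 1 the
    period before that, and so on.
    """
    reviews = reviews.assign(date=pd.to_datetime(reviews["date"]))
    # Align every business at its own most recent review.
    last = reviews.groupby("business_id")["date"].transform("max")
    days_before_last = (last - reviews["date"]).dt.days
    # Fixed-length windows, approximating one month as 30.44 days.
    window = (days_before_last // (30.44 * window_months)).astype(int)
    return (
        reviews.assign(window=window)
        .groupby(["business_id", "window"])
        .agg(review_count=("stars", "count"),
             avg_stars=("stars", "mean"),
             avg_polarity=("polarity", "mean"))
        .reset_index()
    )


# Synthetic example: business "a" spans about a year, "b" about six months.
demo = pd.DataFrame({
    "business_id": ["a", "a", "a", "a", "b", "b"],
    "date": ["2020-12-31", "2020-10-01", "2020-05-01", "2019-12-01",
             "2018-06-30", "2018-01-01"],
    "stars": [5, 3, 4, 4, 2, 4],
    "polarity": [0.8, 0.2, 0.5, 0.6, -0.1, 0.4],
})
summary = retrospective_windows(demo)
print(summary)
```

Because each business is aligned at its own latest review, window 0 for "a" (late 2020) and window 0 for "b" (mid-2018) are directly comparable even though they fall in different calendar years.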

In general, the number of reviews a restaurant receives per window increases at first, then slowly plateaus and declines slightly. There are some minor differences within this general trend. For example, restaurants whose review history began about 16 years ago saw review counts increase slowly at first, which most likely reflects the growth of Yelp’s user base as a whole. More recent businesses show a steeper initial increase in review count, which could be because most new restaurants get a large influx of customers in their opening phase.

As for the trend in average review ratings, most businesses converge to an average between 3.5 and 4 stars. Interestingly, almost all groups start at a rating of about 4 stars. Businesses may receive better ratings soon after opening because they are more motivated to go above and beyond to capture market share early on, or because people are simply excited to try a new place and therefore think better of it. As businesses establish themselves, their ratings trend downward, which could be due to a larger influx of customers bringing more diverse ratings, or to the novelty of a new business wearing off. Either way, they all seem to follow the same pattern of converging to a stable average of roughly 3.5 to 4 stars.

The review sentiment, as measured by the polarity score, mirrors the trend in average star ratings. Reviews are generally positive, with customers expressing a more positive outlook in earlier reviews. The positivity of the sentiment slowly decreases over time, which again could be due to more diverse opinions from a larger customer base or to waning customer enthusiasm.
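
The group-level curves discussed above (for example, restaurants with about 16 years of review history) can be sketched by averaging a per-window metric across businesses with similar history lengths. This sketch assumes a per-window summary table with hypothetical columns `business_id`, `window` (0 = most recent six months), and `avg_stars`; the function name `cohort_trends` and the rule of grouping by whole years of history are assumptions, not the project's documented method.

```python
import pandas as pd


def cohort_trends(summary: pd.DataFrame, window_months: int = 6) -> pd.DataFrame:
    """Mean avg_stars per (cohort, window), where a cohort is whole years of history.

    Rows of the result are cohorts; columns are window indices. Reading a row
    from its highest window down to 0 runs from a business's earliest reviews
    to its latest ones.
    """
    # History length = number of windows the business has reviews in (assumed contiguous).
    n_windows = summary.groupby("business_id")["window"].transform("max") + 1
    cohort_years = (n_windows * window_months) // 12
    return (
        summary.assign(cohort_years=cohort_years)
        .groupby(["cohort_years", "window"])["avg_stars"]
        .mean()
        .unstack("window")
    )


# Synthetic example: "a" and "b" have 2 years of history, "c" has 1 year.
summary = pd.DataFrame({
    "business_id": ["a"] * 4 + ["b"] * 4 + ["c"] * 2,
    "window": [0, 1, 2, 3, 0, 1, 2, 3, 0, 1],
    "avg_stars": [3.5, 3.6, 3.9, 4.1, 3.7, 3.8, 3.9, 4.1, 3.6, 4.0],
})
trends = cohort_trends(summary)
print(trends)  # the 1-year cohort has no values for windows 2 and 3
```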

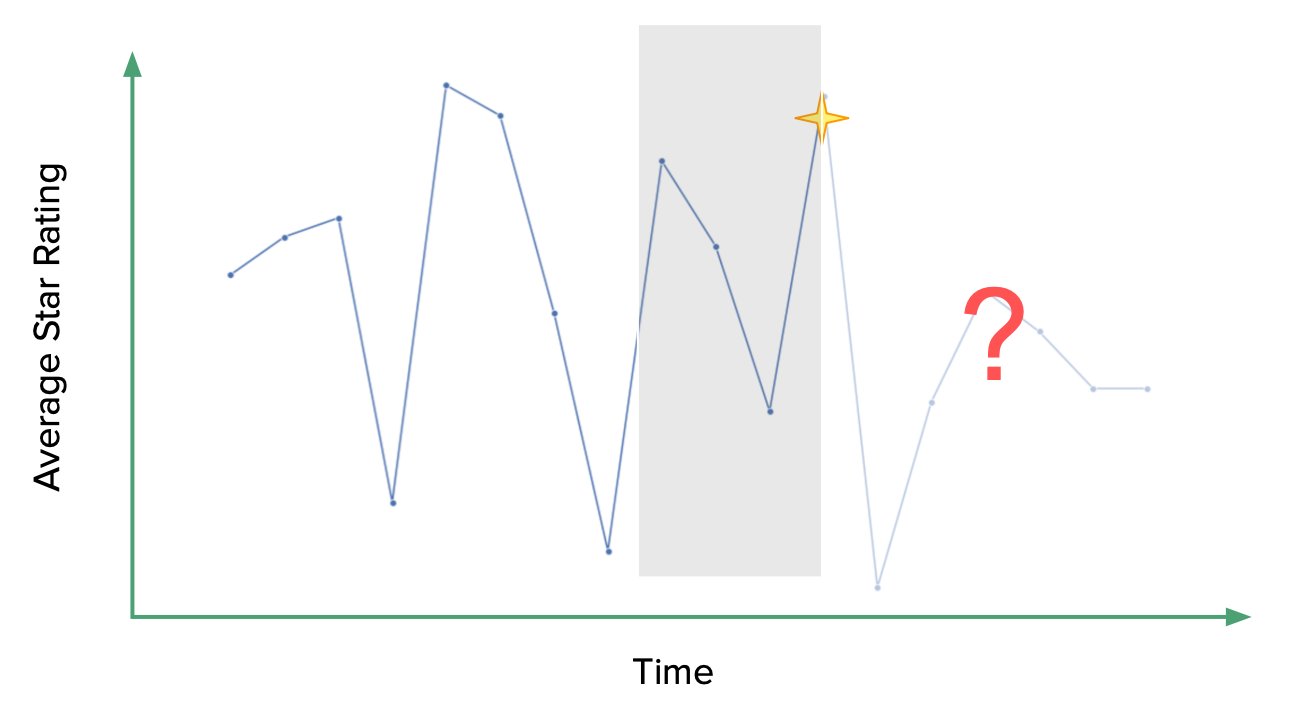

Modeling the future impact of a review

Going a step further, I wanted to see whether it is possible to predict a restaurant’s future ratings based on a given review and the restaurant’s previous review trends.

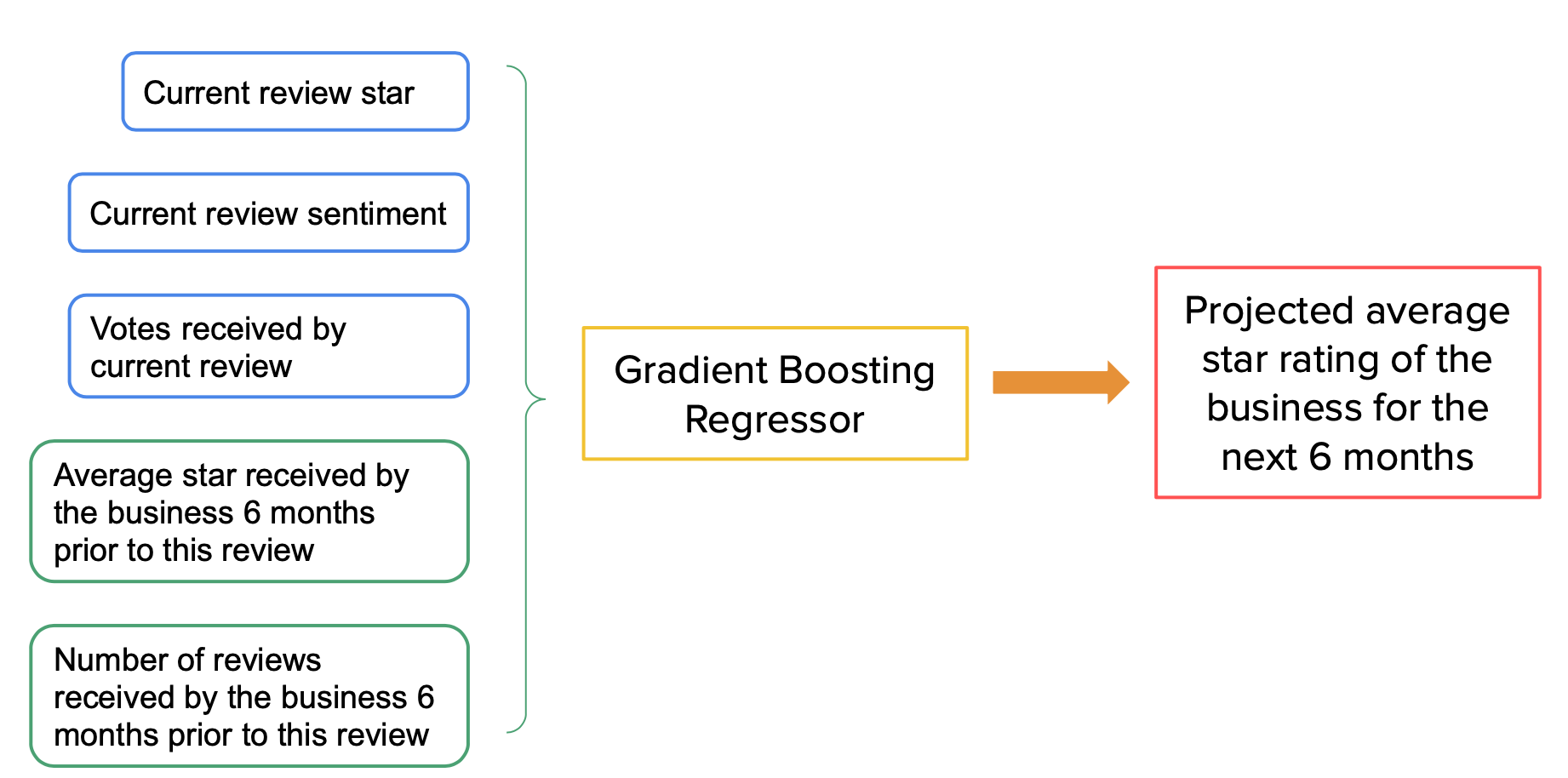

Features

Specifically, the features I used were 1) the star rating of the given review, 2) the positive, negative, neutral, and compound sentiment scores of the given review, computed with the NLTK library, 3) the votes received by the given review, 4) the number of reviews the business received in the previous 6 months, and 5) the business’s average star rating over the previous 6 months.
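
One way to turn a single review into one training row (features plus the next-6-month target) is sketched below. It assumes the sentiment columns `neg`, `neu`, `pos`, and `compound` were precomputed with NLTK's VADER analyzer (`SentimentIntensityAnalyzer().polarity_scores(text)` returns a dict with exactly those keys), that votes are stored as a single numeric `votes` column, that the previous and next windows exclude reviews sharing the given review's timestamp, and that 6 months is approximated as 183 days. The function name `review_features` is hypothetical, and the NLTK call is left out so the sketch runs without downloading the VADER lexicon.

```python
import pandas as pd

WINDOW = pd.Timedelta(days=183)  # assumed approximation of 6 months


def review_features(biz: pd.DataFrame, i: int) -> dict:
    """Features and target for review `i` of a single business.

    `biz` holds one business's reviews with columns: date, stars, votes,
    neg, neu, pos, compound (VADER-style sentiment scores).
    """
    t = biz.loc[i, "date"]
    before = biz[(biz["date"] >= t - WINDOW) & (biz["date"] < t)]
    after = biz[(biz["date"] > t) & (biz["date"] <= t + WINDOW)]
    row = biz.loc[i]
    return {
        "stars": row["stars"],
        "neg": row["neg"], "neu": row["neu"], "pos": row["pos"],
        "compound": row["compound"],
        "votes": row["votes"],
        "prev_count": len(before),
        "prev_avg_stars": before["stars"].mean(),        # NaN if no earlier reviews
        "target_next_avg_stars": after["stars"].mean(),  # NaN if no later reviews
    }


# Synthetic reviews for one business.
biz = pd.DataFrame({
    "date": pd.to_datetime(["2019-01-01", "2019-03-01", "2019-06-01",
                            "2019-08-01", "2020-06-01"]),
    "stars": [4, 2, 5, 3, 1],
    "votes": [0, 1, 3, 0, 2],
    "neg": [0.0, 0.4, 0.0, 0.1, 0.6],
    "neu": [0.6, 0.5, 0.5, 0.7, 0.4],
    "pos": [0.4, 0.1, 0.5, 0.2, 0.0],
    "compound": [0.7, -0.5, 0.9, 0.1, -0.8],
})
feats = review_features(biz, 2)
print(feats)
```

Rows whose target is NaN (no reviews in the following window) would need to be dropped before training, which is one reason the target is noisier for businesses with short histories.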

Model performance

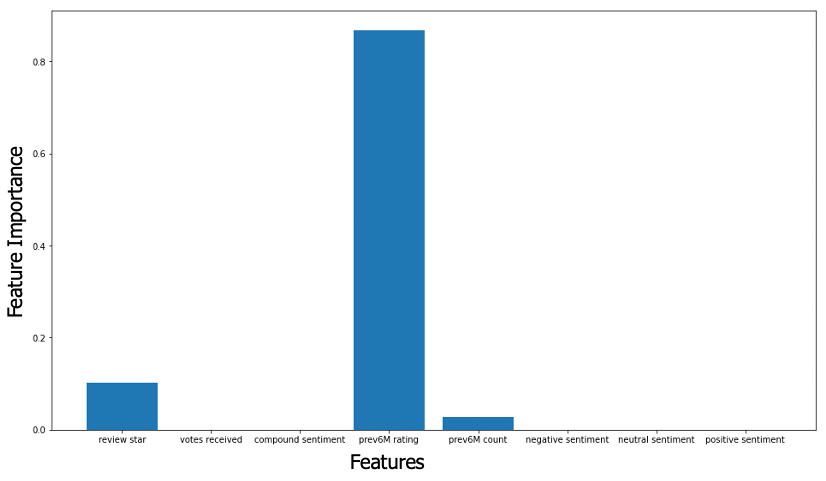

The gradient boosting regression model achieves a mean R² of 0.62 under 5-fold cross-validation, meaning it explains about 62% of the variance in the target rating on held-out folds. Below is a figure summarizing feature importance. The average star rating over the previous 6 months is the most important feature. This finding is not surprising, given that ratings tend to converge over time, as observed in the overall trend of reviews.
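
This kind of evaluation can be outlined with scikit-learn's `GradientBoostingRegressor`, `KFold`, and `cross_val_score`. Each fold's R² is 1 - SS_res / SS_tot computed on that held-out fold, and the reported figure is the mean of the five. The sketch runs on synthetic data (not the Yelp data) whose target is deliberately driven mostly by `prev_avg_stars`, so its scores say nothing about the real model; default hyperparameters and shuffled folds are assumptions.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import KFold, cross_val_score

# Hypothetical feature names mirroring the five feature groups listed above.
FEATURES = ["stars", "neg", "neu", "pos", "compound", "votes",
            "prev_count", "prev_avg_stars"]

# Synthetic stand-in data (NOT the Yelp data): the target depends mostly on
# prev_avg_stars, weakly on the review's stars, and not at all on the rest.
rng = np.random.default_rng(0)
n = 2000
X = rng.random((n, len(FEATURES)))
X[:, 0] = rng.integers(1, 6, n)        # stars of the given review, 1..5
X[:, 7] = rng.uniform(2.5, 4.5, n)     # average stars over previous 6 months
y = 0.8 * X[:, 7] + 0.05 * X[:, 0] + rng.normal(0, 0.2, n)

model = GradientBoostingRegressor(random_state=0)
cv = KFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(model, X, y, cv=cv, scoring="r2")  # one R² per fold
print(f"mean R^2 over 5 folds: {scores.mean():.2f}")

# Impurity-based importances from a model refit on all rows; they sum to 1.
model.fit(X, y)
ranked = sorted(zip(FEATURES, model.feature_importances_),
                key=lambda p: p[1], reverse=True)
for name, importance in ranked:
    print(f"{name:>15s} {importance:.3f}")
```

Shuffled folds can place reviews of the same business in both training and test folds; grouping folds by business (for example with scikit-learn's `GroupKFold`) would be a stricter check.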

Concluding remarks

I built a gradient boosting regressor to predict a restaurant’s average rating over the next 6 months based on a given review and the restaurant’s past review trend. According to the GBR model, the restaurant’s average rating over the past six months is the most important predictor of its future rating. There does not seem to be a “golden review” that can drastically change a restaurant’s rating.
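
One way to probe the “golden review” question with a fitted model is to hold a business’s history features fixed and compare predictions for the worst and best possible review. The sketch below does this on synthetic data in which the review’s own effect is small by construction, so it illustrates the probe, not the result. The four-feature layout and the helper `review_swing` are hypothetical.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor

# Synthetic training data (NOT the Yelp data). Hypothetical feature order:
# [review stars, review compound sentiment, prev_count, prev_avg_stars]
rng = np.random.default_rng(1)
n = 2000
stars = rng.integers(1, 6, n).astype(float)
compound = np.clip((stars - 3) / 2 + rng.normal(0, 0.3, n), -1, 1)
prev_count = rng.integers(5, 200, n).astype(float)
prev_avg = rng.uniform(2.5, 4.5, n)
X = np.column_stack([stars, compound, prev_count, prev_avg])
# By construction the review itself moves the target only slightly.
y = 0.85 * prev_avg + 0.04 * stars + rng.normal(0, 0.2, n)

model = GradientBoostingRegressor(random_state=0).fit(X, y)


def review_swing(model, history):
    """Predicted next-window rating for the worst vs. best possible review,
    holding the history features (prev_count, prev_avg_stars) fixed."""
    worst = np.array([[1.0, -1.0, *history]])
    best = np.array([[5.0, 1.0, *history]])
    return model.predict(worst)[0], model.predict(best)[0]


low, high = review_swing(model, [50.0, 3.8])
print(f"1-star, very negative review: {low:.2f}")
print(f"5-star, very positive review: {high:.2f}")
print(f"swing attributable to the review: {high - low:.2f} stars")
```

Applied to the real model, a small swing relative to the spread of `prev_avg_stars` would be consistent with the conclusion that no single review drastically changes a restaurant’s rating.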

Of course, this model is not perfect. One limitation is that the target feature of the training data (the average rating of the next 6 months of reviews) might not be very accurate, especially for restaurants with a shorter review history. Another limitation is that I modeled both the previous-rating features and the future-rating target using a 6-month time window, which is relatively long. The model might perform better if the data were segmented using a shorter time window, such as 3 months.

Additional elements/features to explore include:

- Incorporating additional features related to the reviewer, the business subtype, and more detailed review information, such as whether the review was posted in summer or winter

- Testing different time windows when segmenting the reviews

- Comparing the model’s accuracy when forecasting short-term ratings (e.g., 1 to 3 months ahead) versus long-term ratings (e.g., 6 to 12 months ahead)